I attended 15 presentations. Thirteen of these were more or less related to topics I have experience with; the other two were very confusing to me, which I attribute to not having experience in risk management yet. In 12 of the 13 studies that I understood well, the authors used one type of regression or another. Regression is a religion here. Below, I highlight five points as the main result of my participation.

1) The low quality of the studies

I provide a few examples. Dr. Fleten, in his “The Reliability Pricing Mechanism and Coal-Fired Generators in PJM,” doesn’t know whether they explored conventional power plants or combined heat and power plants (CHPs); he doesn’t know if CHPs use coal as a fuel; he can’t say anything about the “must-run” status of coal-fired power plants. Dr. Serafin, in his “PCA forecast averaging – predicting day-ahead and intraday electricity prices,” offers “draconic” regression. He puts enormous effort into training and at the same time forgets about cross-validation, an elegant and efficient methodology. Dr. von Luckner, in his “Hawkes process and market maker pricing on the German intraday electricity market,” proves the efficiency of his trading strategy with profit-and-loss values that skip transaction costs. Indeed, without transaction costs, it looks much more convincing. Dr. Zormpas, in his “Investing in flexible combined heat and power generation,” forgets a core feature of combined heat and power plant operation: you can’t switch the plant off while there is heat demand… And so on and so forth…
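To make the point about transaction costs concrete, here is a minimal sketch of how the same set of trades can look profitable gross and loss-making net. All numbers are made up for illustration, and the `cost_per_mwh` fee is my own hypothetical assumption; none of this is taken from the presentation.

```python
def pnl(trades, cost_per_mwh=0.0):
    """P&L of round-trip trades given as (buy_price, sell_price, volume_mwh).

    `cost_per_mwh` is a hypothetical fee charged on both the buy leg
    and the sell leg of every trade.
    """
    gross = sum((sell - buy) * vol for buy, sell, vol in trades)
    costs = sum(2 * cost_per_mwh * vol for _, _, vol in trades)
    return gross - costs


# Illustrative intraday round trips: small price edges, 10 MWh each.
trades = [
    (50.0, 50.4, 10.0),
    (48.0, 48.3, 10.0),
    (52.0, 51.9, 10.0),
    (47.0, 47.5, 10.0),
]

gross = pnl(trades)                    # transaction costs ignored
net = pnl(trades, cost_per_mwh=0.2)    # assumed fee per MWh per leg

print(f"gross P&L: {gross:.2f}")
print(f"net P&L:   {net:.2f}")
```

With edges this thin, even a small fee per leg flips the sign of the result, which is why a P&L table without costs proves very little.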

2) Trickery

I noticed long ago that there are two simple ways to prove the high efficiency of your scientific study.

- Gap between the theory and practice

In a paper, there is a large theoretical part. First comes a long list of monstrous equations. Then, voilà, out of nowhere, one or two plots with results are thoroughly discussed. How did the author overcome the gap between the theory and the practice? How were the numerical results obtained? What software was used? What packages? It remains a mystery. This confusing combination of massive equations and plots is considered highly scientific. A vivid example is Dr. Roncoroni with “A New Integrated Risk-Management Policy for the Newsvendor Position.” After 10 minutes of his presentation, the number of listeners fell from ten to five. By the way, Dr. Roncoroni loves to chronicle his speeches. I have just one question: who are the listeners?

- Benchmark model selection

In a paper, an author offers a mathematical model, for example a forecast model. As a rule, the author must prove the efficiency of the offered model. In practice, this rule is now commonly applied as follows. First, the same author chooses a so-called benchmark model. Second, the new model easily beats the chosen benchmark, sometimes by more than a factor of two. After such a “competition,” the offered model is considered very efficient. Neither in the papers nor at CEMA-2021 did I see any researcher benchmark against existing production solutions. A vivid example is Mr. (Dr.?) Uniejewski with “Regularized Quantile Regression Averaging for probabilistic electricity price forecasting.” These authors won’t come to Kaggle.com. They would be harshly trampled there.
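The effect of picking your own benchmark can be shown with a minimal sketch. The prices and forecasts below are invented purely for illustration, and the names `weak_bench` and `strong_bench` are my own; the "strong" series is only a stand-in for what a production solution might deliver, not data from any real system.

```python
from statistics import mean


def mae(actual, forecast):
    """Mean absolute error between two equal-length sequences."""
    return mean(abs(a - f) for a, f in zip(actual, forecast))


def improvement(actual, candidate, benchmark):
    """Relative MAE reduction of `candidate` over `benchmark`.

    Positive means the candidate beats the benchmark; negative means it loses.
    """
    return 1.0 - mae(actual, candidate) / mae(actual, benchmark)


# Made-up prices and forecasts, illustrative only.
actual       = [40.0, 42.0, 55.0, 60.0, 48.0, 45.0]
candidate    = [41.0, 44.0, 52.0, 57.0, 50.0, 44.0]  # the offered model
weak_bench   = [45.0, 45.0, 45.0, 45.0, 45.0, 45.0]  # flat forecast chosen by the author
strong_bench = [40.5, 43.0, 54.0, 58.5, 47.0, 45.5]  # stand-in for a production solution

vs_weak = improvement(actual, candidate, weak_bench)
vs_strong = improvement(actual, candidate, strong_bench)

print(f"vs weak benchmark:   {vs_weak:+.0%}")
print(f"vs strong benchmark: {vs_strong:+.0%}")
```

The same candidate forecast looks like a breakthrough against the flat benchmark and like a step backwards against the stronger one, so the reported "efficiency" says more about the benchmark than about the model.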

3) Number of attendees

This was a matter of curiosity for me. The conference lasted for two days and consisted of 72 studies, 72 presenters and appointed discussants, and 174 accepted authors (one study might have up to five authors). There were four simultaneous sessions every day. I read all the abstracts in advance and decided what I was going to listen to. From time to time, I opened parallel sessions in another window to note how many attendees were there. From these notes, I can say the following. On average, there were 8-10 people in every session. The maximum was reached in the first session of the first day, for Dr. Fleten’s “The Reliability Pricing Mechanism and Coal-Fired Generators in PJM,” with 24 people; the minimum was at Dr. Roncoroni’s “A New Integrated Risk-Management Policy for the Newsvendor Position,” with 5 people. At the general assembly on the last day, when the prizes found their owners, there were 25 people online. Thus, on average, there were 50-60 people online at any time; they came and went. People like me, who attended 15 presentations, were a minority. Please note that CEMA aims to be the leading international event in the area.

4) Praising one another

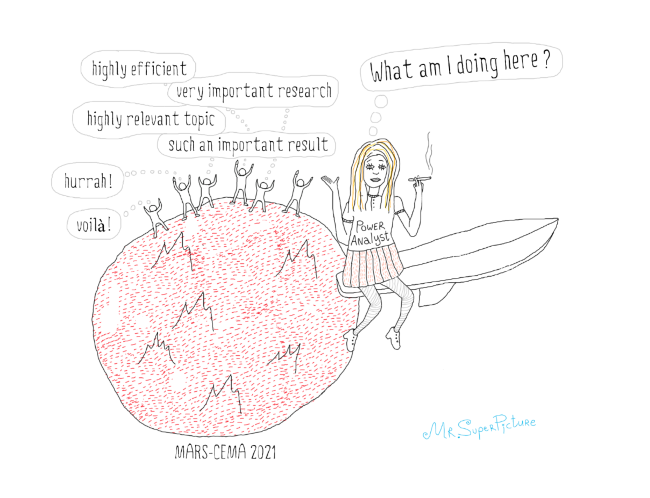

It’s impossible to skip this point as it consumed 30% of the conference time. The schedule was announced as 30 minutes per topic: 20 minutes for the presentation, 5 minutes for the appointed discussant, and 5 minutes for questions from the audience. During the 15 topics I listened to, the questions from the audience amounted to a few from me (whatever I was able or allowed to ask) and one from anyone else. In particular, after Dr. Kronies’s “The bigger, the better?” Amine Loutia, if I recall her name correctly, suggested that the author explore elasticity instead of prices. That’s it. No one asked anything else. Usually, after a presentation, the discussant talked. The majority of them repeated the topic in other words, and there was a waterfall of phrases like “highly efficient,” “very important research,” “highly relevant topic,” and “such an important result.” The supreme case was Dr. Figuerola-Ferretti. She was choking with delight for 15 minutes straight. Moreover, it felt like she didn’t say half of what she intended to. Thus, the actual timing was as follows: 20-22 minutes for the presentation, 8-12 minutes for the discussant to talk and praise their colleague, and 0-1 minute for me to try to kick the presenter’s ass (most of them deserved tough questions from a tough practitioner).

5) Outcome

The CEMA-2021 conference is a scientific swamp. The questions, blogs, and complaint I’ve submitted would cause a slight gurgle on the surface of that swamp. To make this swamp move, you would need a bomb. I don’t have one. This scientific community doesn’t want to hear from practitioners, doesn’t want to benchmark against production solutions, doesn’t want to study anything apart from regression (I have to admit they have achieved significant results in “hammering in nails with a spade”), doesn’t want to hear about machine learning, etc. Additionally, they defend one another from invaders like me. During Dr. Marcjasz’s slide about training a simple three-layer feed-forward neural network for 33 years, I wrote in the session chat: “It’s not correct.” I was going to ask a tough question in the scheduled time. Dr. Detemple, the discussant, talked for 10 minutes straight and, foreseeing my intention, literally shut me down during the time set aside for questions from the audience. It feels like this “scientific community” lives on the moon, no, even on Mars, because the Earth is closer to the moon and can be seen from it. Mars is further. I have the impression that these people are not willing to see the Earth…